OpenAI is all the rage now, and developers are rushing to build this technology into their apps and SaaS products. But demand is so high that you need to think about how to protect yourself from abuse. Here's a post from a colleague I saw on LinkedIn recently:

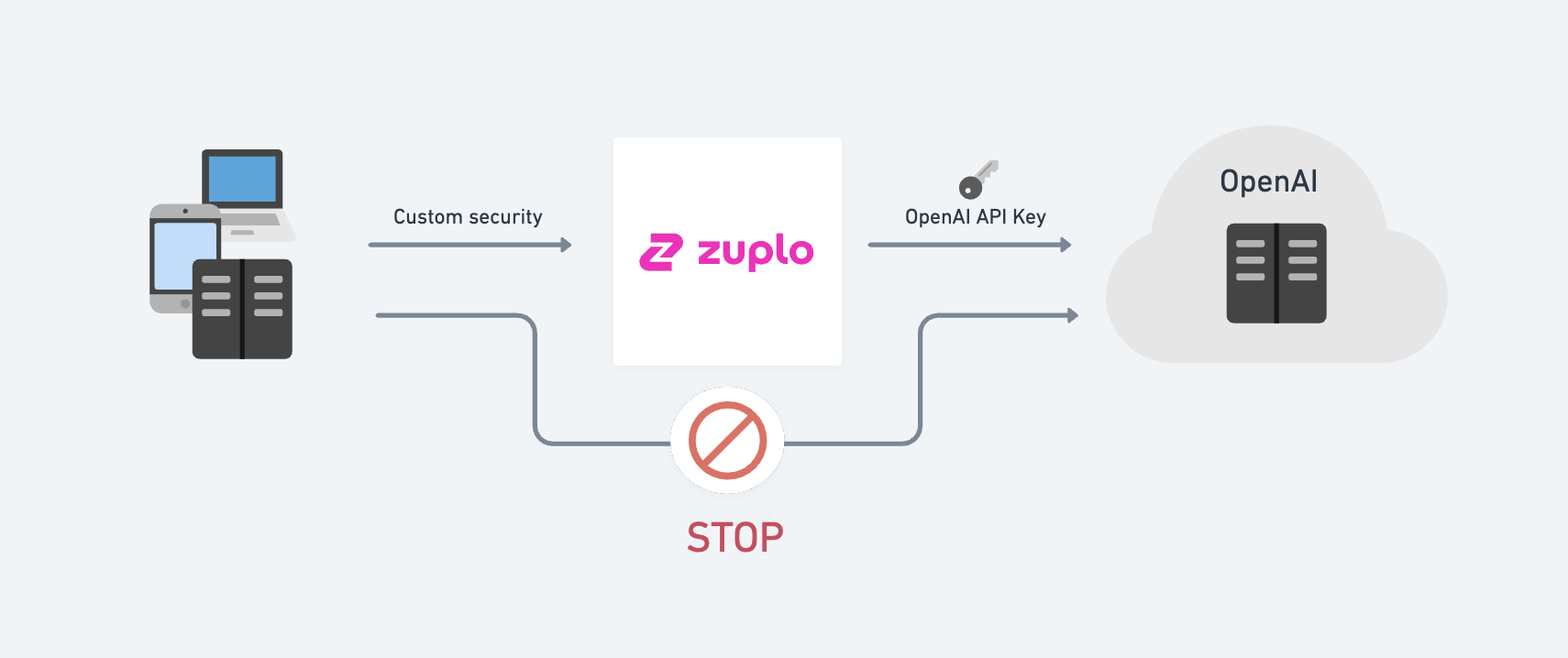

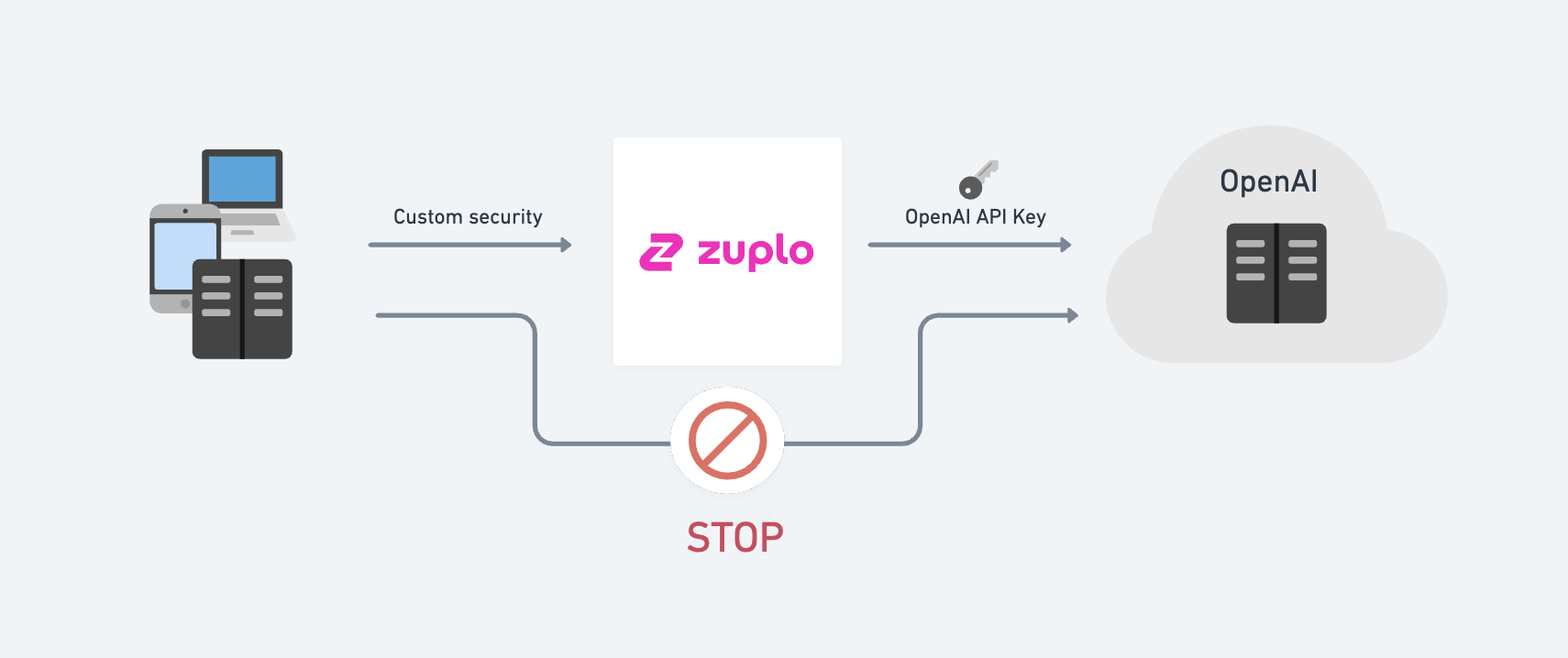

You can use a Zuplo gateway to store your API keys and enforce a number of layers of protection to discourage abuse of your OpenAI API keys.

How it works

Zuplo makes it easy to perform authentication translation, that is, to change the authentication method your clients use. For example, you might have your clients call you with:

- JWT tokens

- API keys issued to each of your customers (different from your OpenAI key, so you can identify each individual customer)

- No credentials at all, from a web browser. In that case, make sure the call comes from the right origin, enforce CORS, and rate-limit by IP.
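To make the idea concrete, here is a minimal TypeScript sketch of authentication translation written as a pure function. It is not how Zuplo implements it. The names `translateAuth` and `customers`, and the `x-api-key` header, are hypothetical and exist only for this illustration.

```typescript
// Illustrative sketch of authentication translation, not Zuplo's implementation.
type HeaderMap = Record<string, string>;

// Hypothetical table mapping the keys you issue to customers onto customer IDs.
const customers = new Map<string, string>([["cust-key-123", "customer-a"]]);

interface Translated {
  customerId: string;
  headers: HeaderMap;
}

// Swap the client's credential for the upstream OpenAI key.
// Returns null when the client key is unknown, so the caller can reply with a 401.
function translateAuth(incoming: HeaderMap, openAiKey: string): Translated | null {
  const clientKey = incoming["x-api-key"];
  const customerId = clientKey ? customers.get(clientKey) : undefined;
  if (!customerId) return null;

  // Copy every header except the client's credential, which must not reach OpenAI.
  const headers: HeaderMap = {};
  Object.keys(incoming).forEach((name) => {
    if (name !== "x-api-key") headers[name] = incoming[name];
  });
  headers["authorization"] = `Bearer ${openAiKey}`;

  return { customerId, headers };
}
```

The client never sees the OpenAI key, and the gateway still knows which customer made the call.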

Setting up Zuplo to send the API Key

This is a curl command that calls the OpenAI API directly. Note that it needs an API key:

```bash
curl https://api.openai.com/v1/completions \
  -H 'Content-Type: application/json' \
  -H 'Authorization: Bearer YOUR_API_KEY_HERE' \
  -d '{
    "model": "babbage",
    "prompt": "Say this is a test",
    "max_tokens": 7,
    "temperature": 0
  }'
```

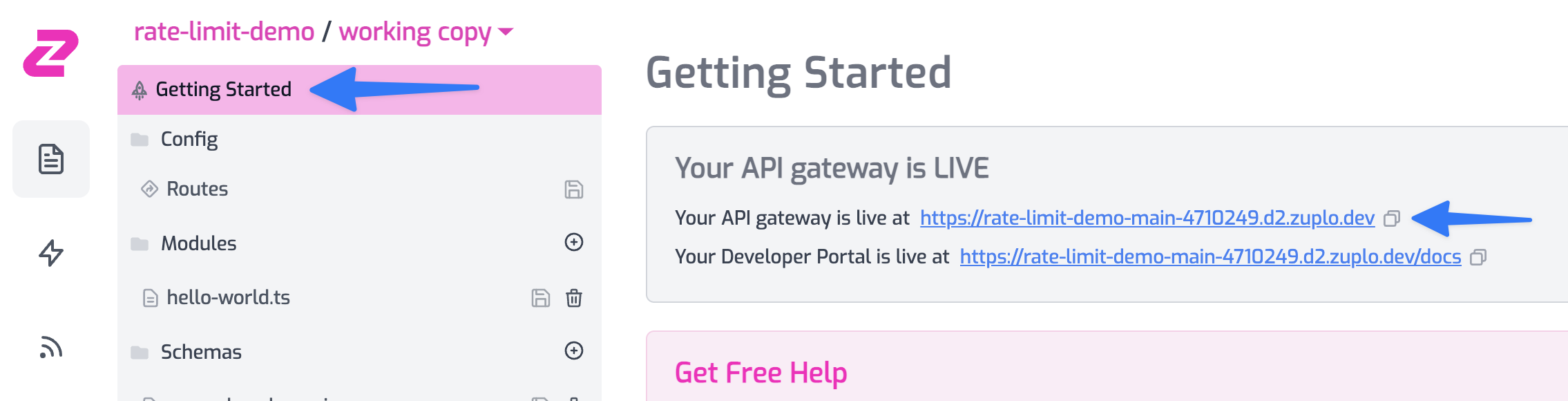

To get started we'll create a simple Zuplo gateway that removes the need to specify the API key.

Create a new project and add a route:

- Summary: My OpenAI Completions

- Path: /v1/my-completions

- Method: POST

- CORS: No CORS

- Handler: URL Rewrite - https://api.openai.com/v1/completions

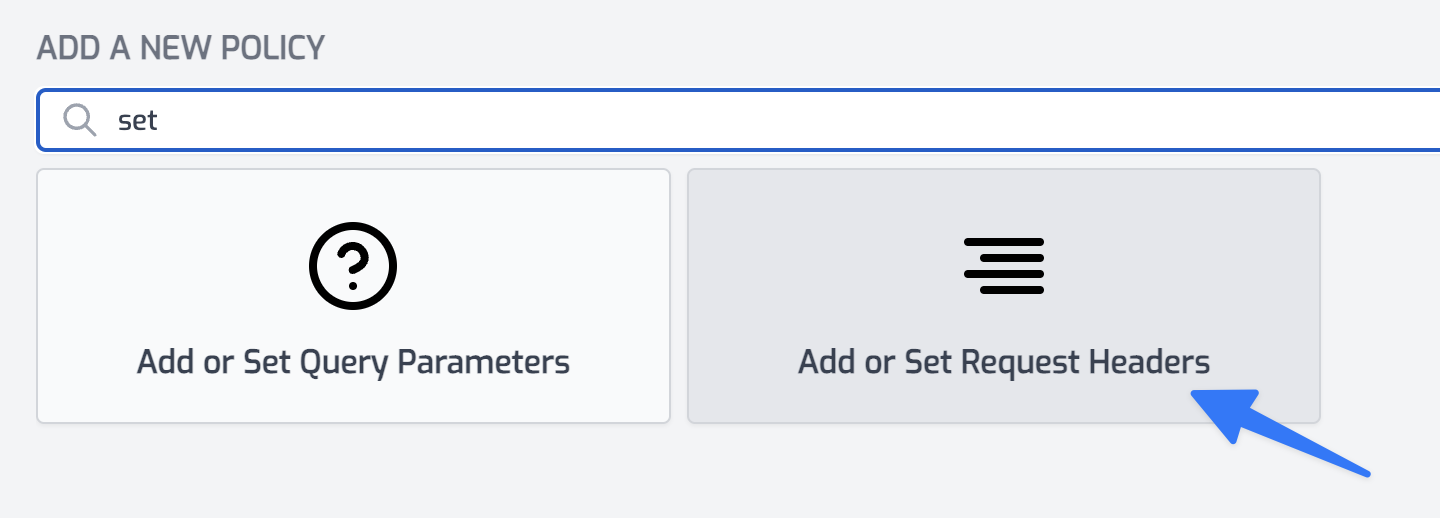

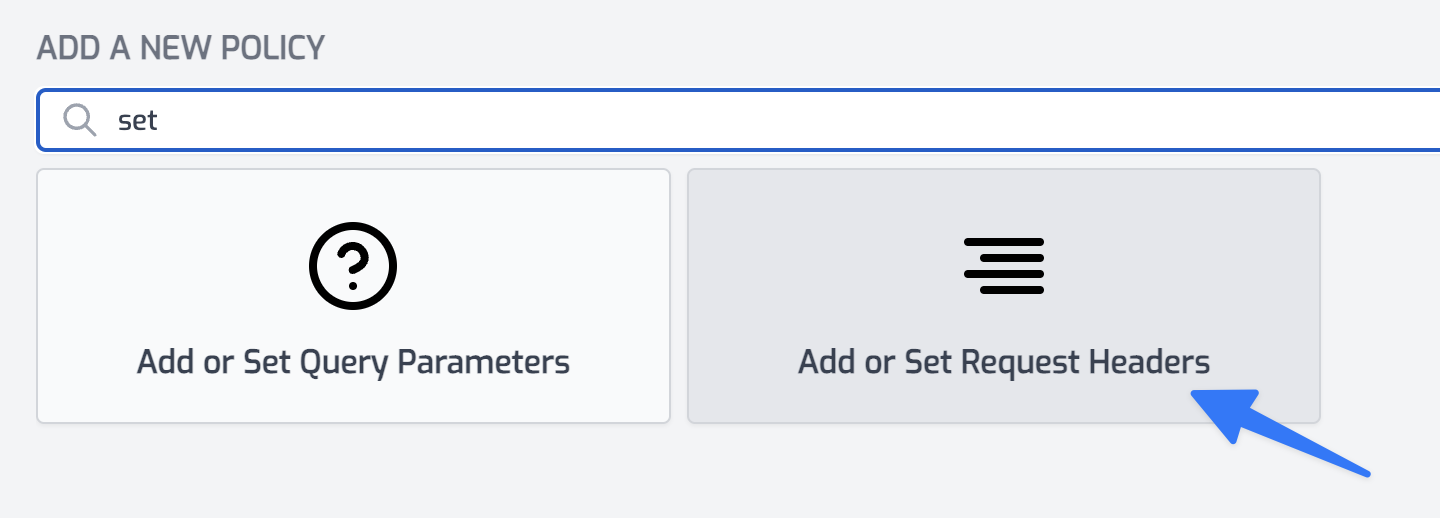

Next, we need to add a policy that will set the authorization header when calling OpenAI. Open the Policies section and click Add Policy.

Choose the Add or Set Request Headers policy. Set the policy configuration as follows:

```json
{
  "export": "SetHeadersInboundPolicy",
  "module": "$import(@zuplo/runtime)",
  "options": {
    "headers": [
      {
        "name": "authorization",
        "value": "Bearer $env(OPEN_AI_API_KEY)",
        "overwrite": true
      }
    ]
  }
}
```

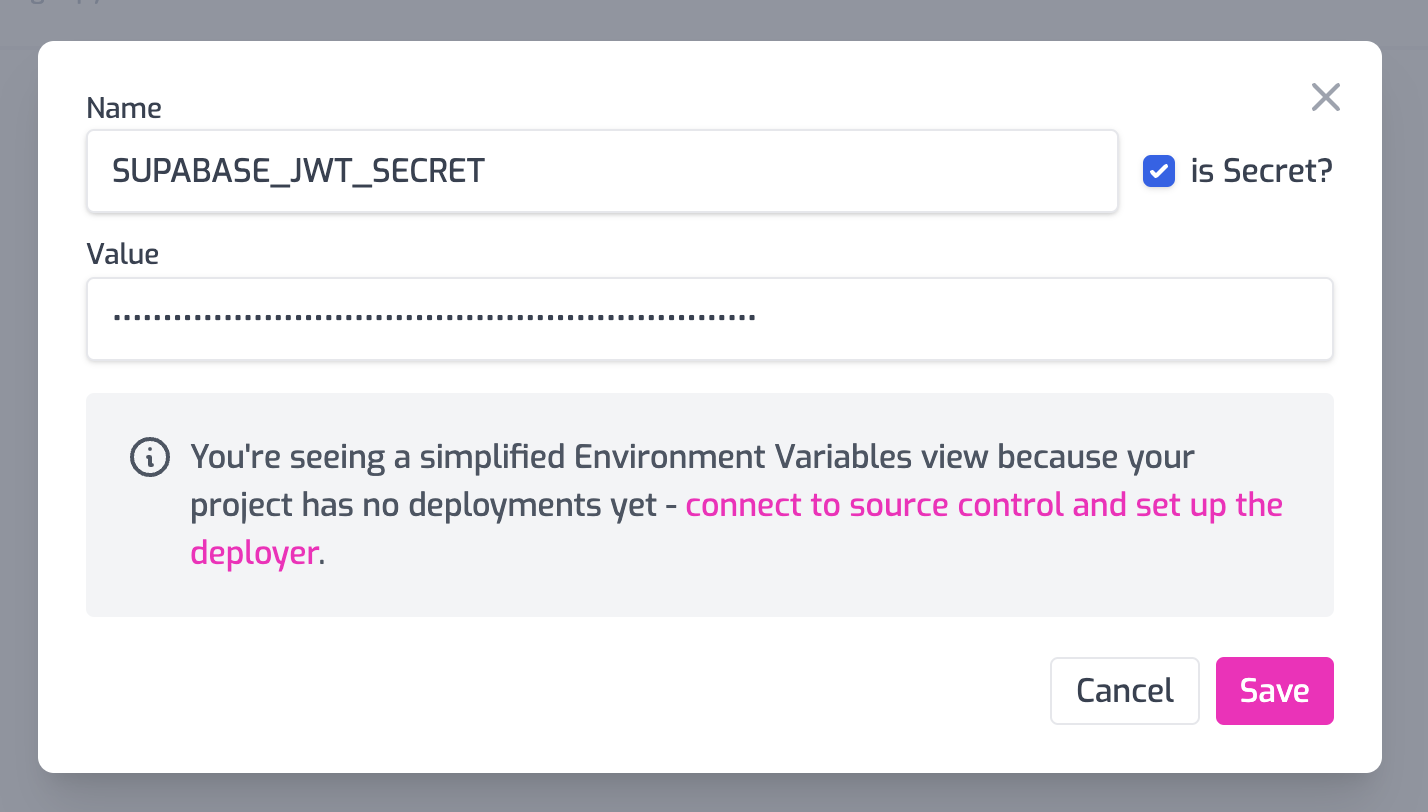

Note that the API key is read from a secret stored as an environment variable, so go set up your OPEN_AI_API_KEY environment variable.

Save your changes, and we're ready.

Take the curl command above, remove the authorization header, and change the URL to your project URL:

```bash
curl https://open-ai-main-298dc8d.d2.zuplo.dev/v1/my-completions \
  -H 'Content-Type: application/json' \
  -d '{
    "model": "babbage",
    "prompt": "Say this is a test",
    "max_tokens": 7,
    "temperature": 0
  }'
```

Look, no API key 👏. Your request should still work, because Zuplo adds the key on the way out.

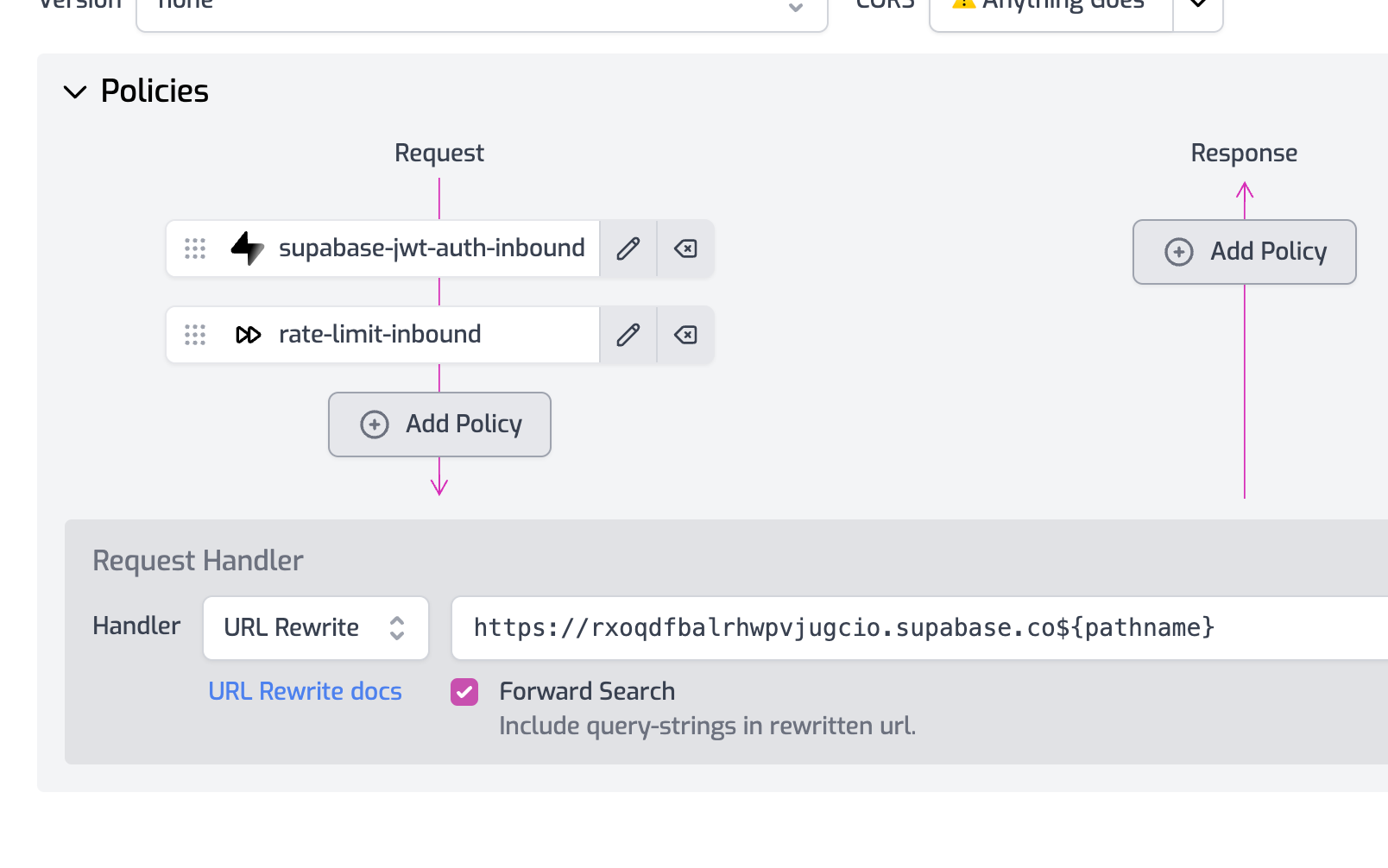

Securing Zuplo

You now have several choices to secure Zuplo.

- Require your users to login (with a service like Auth0) and then use an Auth0 JWT with Zuplo.

- Issue API Keys to all your users using Zuplo's API Key Service.

- Host anonymously but add additional safeguards, including requiring a specific Origin and strict CORS using custom CORS policies.

Make sure to add rate limiting, keyed on the user or, for the anonymous use case, on the IP address.
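The sketch below only illustrates the idea of choosing a rate-limit key per user or per IP. It is a simple in-memory fixed-window limiter, not Zuplo's rate limiting policy, and `FixedWindowLimiter` and `rateLimitKey` are hypothetical names.

```typescript
// Illustrative fixed-window rate limiter; a sketch of the idea, not Zuplo's policy.
class FixedWindowLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly windows = new Map<string, { start: number; count: number }>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Returns true when the call identified by `key` is allowed at time `now` (in ms).
  allow(key: string, now: number): boolean {
    const current = this.windows.get(key);
    // Start a fresh window on the first call or once the old window has expired.
    if (!current || now - current.start >= this.windowMs) {
      this.windows.set(key, { start: now, count: 1 });
      return true;
    }
    if (current.count < this.limit) {
      current.count += 1;
      return true;
    }
    return false;
  }
}

// Key by user ID when the caller is authenticated, otherwise fall back to the IP.
function rateLimitKey(userId: string | undefined, ip: string): string {
  return userId ? `user:${userId}` : `ip:${ip}`;
}
```

Keying anonymous traffic by IP is coarse, since many users can share one address, which is why the per-user key is preferred whenever the caller is authenticated.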

Event Streaming (data-only server-sent events)

OpenAI supports event streaming, and it works out of the box with Zuplo. You can try it by adding a "stream": true property to the body you POST to OpenAI:

```bash
curl https://open-ai-main-298dc8d.d2.zuplo.dev/v1/my-completions \
  -H 'Content-Type: application/json' \
  -d '{
    "model": "babbage",
    "prompt": "Say this is a test",
    "max_tokens": 7,
    "temperature": 0,
    "stream": true
  }'
```

However, what if you want to support EventSource in the browser? EventSource can only send GET requests, but that is also easy to handle with Zuplo: take the incoming GET request created by EventSource and translate it into a POST request, with the appropriate headers and body, inside Zuplo.

Event streaming doesn't work fully on 'working-copy' but works great on your 'edge deployments'. Read more about environments to promote to an edge deployment.

Create a new route:

- Summary: My OpenAI Completions for Browser Event Source

- Path: /v1/my-browser-completions

- Method: GET

- CORS: Anything Goes

- Handler: URL Rewrite - https://api.openai.com/v1/completions

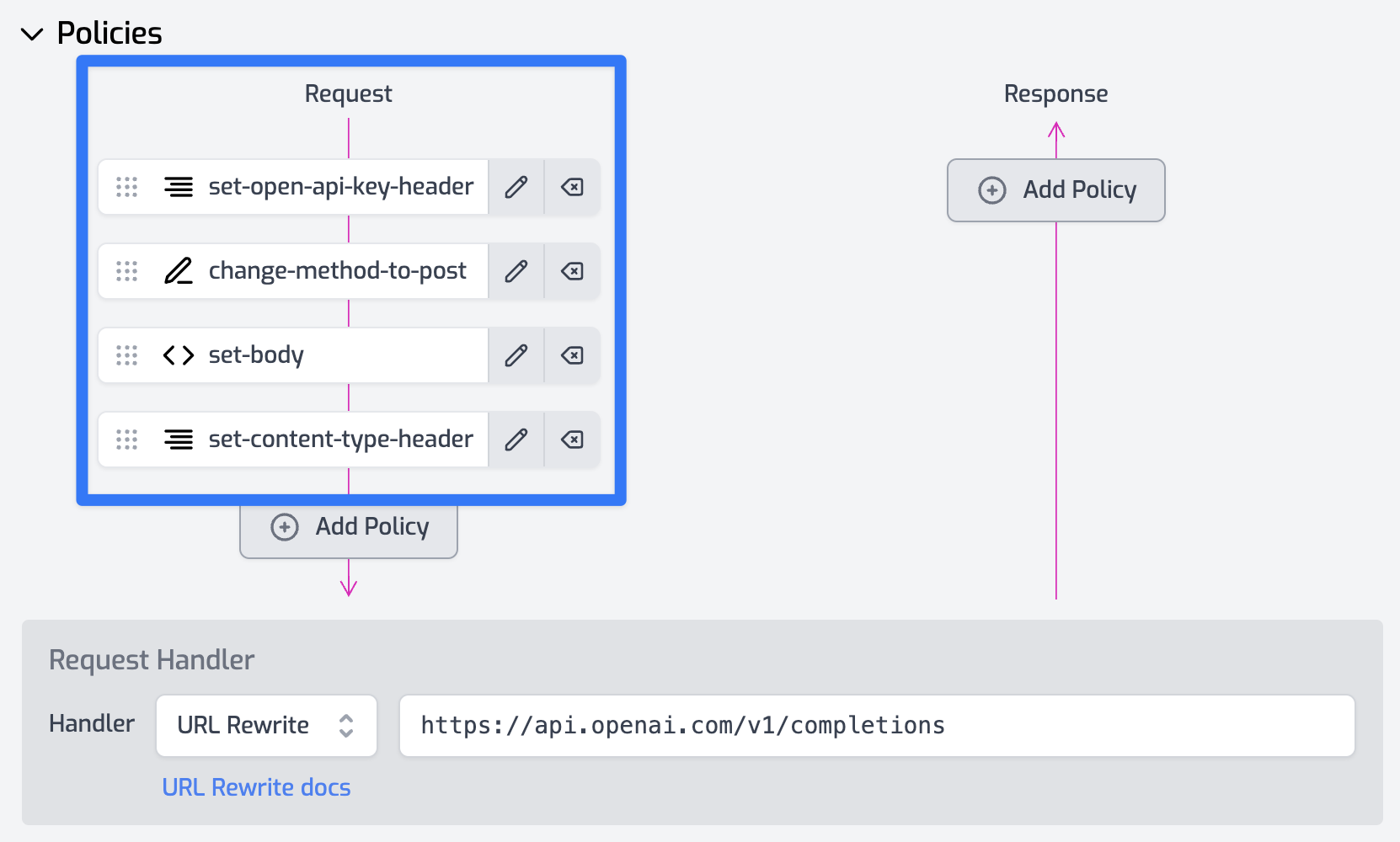

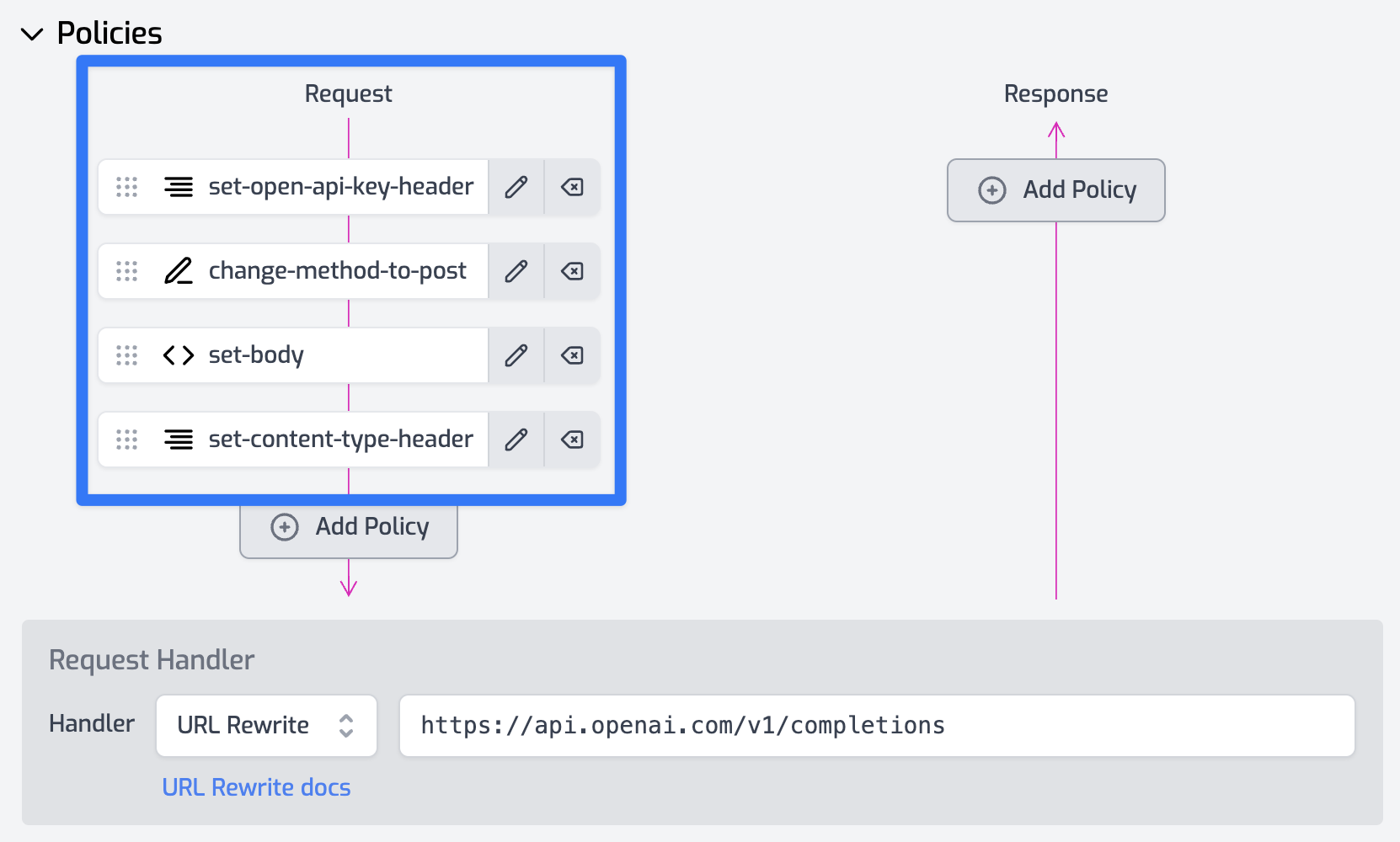

Add the following policies:

- Reuse your Set Header policy that sets the authorization key above.

- Add a Change Method policy to update the request to be a POST.

- Add another Set Header policy to set the content-type header to application/json.

- Finally, add a Set Body policy with the following configuration:

```json
{
  "export": "SetBodyInboundPolicy",
  "module": "$import(@zuplo/runtime)",
  "options": {
    "body": "{ \"model\": \"babbage\", \"prompt\": \"Say this is a test\", \"max_tokens\": 7, \"temperature\": 0, \"stream\": true }"
  }
}
```
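Taken together, the four policies turn the browser's GET into the POST that OpenAI expects. The following sketch models that combined effect as one pure function over a simplified request shape. `SimpleRequest` and `toOpenAiRequest` are hypothetical names for illustration, and real gateway request objects are richer than this.

```typescript
// Simplified stand-in for an HTTP request, used only for this illustration.
interface SimpleRequest {
  method: string;
  headers: Record<string, string>;
  body?: string;
}

// Mirror the four policies: set auth, change method, set content-type, set body.
function toOpenAiRequest(incoming: SimpleRequest, openAiKey: string): SimpleRequest {
  return {
    method: "POST",
    headers: {
      ...incoming.headers,
      authorization: `Bearer ${openAiKey}`,
      "content-type": "application/json",
    },
    body: JSON.stringify({
      model: "babbage",
      prompt: "Say this is a test",
      max_tokens: 7,
      temperature: 0,
      stream: true,
    }),
  };
}
```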

You can now use an EventSource in a browser and call Zuplo as follows:

```js
const evtSource = new EventSource(
  "https://open-ai-main-298dc8d.d2.zuplo.dev/v1/my-browser-completions"
);

evtSource.onmessage = (evt) => {
  if (evt.data === "[DONE]") {
    console.log("end of event stream...");
    // Close the connection; otherwise EventSource reconnects and repeats the request.
    evtSource.close();
    return;
  }
  console.log(JSON.parse(evt.data));
};
```
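Logging each event is fine for a first test, but an app will usually want the full completion text. Here is a minimal sketch of stitching the streamed payloads together, assuming each chunk has the shape `{ choices: [{ text }] }`. `collectText` and `CompletionChunk` are hypothetical names, and the chunk shape is an assumption you should check against the responses you actually receive.

```typescript
// Assumed shape of one streamed completion chunk; verify against real responses.
interface CompletionChunk {
  choices: { text: string }[];
}

// Fold a list of raw event payloads into the full completion text.
// Stops at the "[DONE]" sentinel that marks the end of the stream.
function collectText(payloads: string[]): string {
  let text = "";
  for (const payload of payloads) {
    if (payload === "[DONE]") break;
    const chunk = JSON.parse(payload) as CompletionChunk;
    // Tolerate chunks without a first choice by appending nothing.
    text += chunk.choices[0]?.text ?? "";
  }
  return text;
}
```

In the browser you would apply the same logic incrementally inside `onmessage`, appending each chunk's text as it arrives.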

You could also make the POST body dynamic, based on a querystring in the EventSource URL. You would then read the querystring values in a custom policy and set the body from them (you would no longer need the Set Body policy in this case).

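The custom code (inbound policy) might look like this:

```ts
import { ZuploContext, ZuploRequest } from "@zuplo/runtime";

export default async function (
  request: ZuploRequest,
  context: ZuploContext,
  options: never,
  policyName: string
) {
  // Read the prompt from the querystring, e.g. ?prompt=Say%20this%20is%20a%20test
  const prompt = request.query.prompt;

  const data = {
    model: "babbage",
    prompt,
    max_tokens: 7,
    temperature: 0,
    // Needed so OpenAI answers with server-sent events for EventSource
    stream: true,
  };

  // Return a copy of the request carrying the new JSON body.
  // Run this after the Change Method policy: the Fetch API does not
  // allow a body on a GET request.
  return new ZuploRequest(request, {
    body: JSON.stringify(data),
  });
}
```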
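On the browser side, the prompt then has to be URL-encoded into the querystring. A minimal sketch of that, assuming the `prompt` parameter name read by the policy above. `completionsUrl` is a hypothetical helper name.

```typescript
// Build the EventSource URL with the prompt carried in the querystring.
// encodeURIComponent escapes spaces, "&", "?" and other reserved characters.
function completionsUrl(baseUrl: string, prompt: string): string {
  return `${baseUrl}/v1/my-browser-completions?prompt=${encodeURIComponent(prompt)}`;
}

// In a browser you might then write:
// const evtSource = new EventSource(
//   completionsUrl("https://open-ai-main-298dc8d.d2.zuplo.dev", "Say this is a test")
// );
```

Because anyone can now choose the prompt, the origin checks and rate limiting described earlier matter even more on this route.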